The first step in building a predictive model is to choose the time periods for the predictors (independent variables) and the target variable (dependent variable). To do that, we need to define the observation and performance periods (windows). It is important to spend enough time on this step of a predictive modeling project.

Observation Period

It is the period from which the independent variables (predictors) are derived. In other words, the independent variables are created using data from this period (window) only.

Performance Period

It is the period from which the dependent variable (target) is derived. It is the period following the observation window.

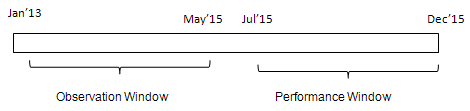

Example

Suppose you are developing a customer attrition model for retail bank customers. 'Customer attrition' means customers leaving the bank. You have historical data from Jan'13 to Dec'15. Data from Jan'13 to May'15 would be used to create the independent variables (predictors). Customers who attrited during Jul'15 - Dec'15 are considered attritors (or events) in the model. The one-month lag between the observation and performance windows (Jun'15) stands for the period during which the population is scored when the model is implemented.

Still confused about the one-month lag logic? Let's take an example. Suppose that in September 2016 you are asked to score the targeted population. You would take historical data up to August 2016, score the population in September, and send the scores to the strategy or campaign team so that they can act on the high-risk customers identified by the model. They will design a campaign and forward it to the frontline team, who contact customers by email or phone. This whole process generally takes one month.
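The date arithmetic in this example can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the helper names `add_months` and `split_windows` are hypothetical, windows are expressed as month-start dates, and the windows are derived by working backwards from the last month of available data.

```python
from datetime import date

def add_months(d: date, n: int) -> date:
    """Return the first day of the month that is n months after d's month."""
    idx = d.year * 12 + (d.month - 1) + n
    return date(idx // 12, idx % 12 + 1, 1)

def split_windows(data_start: date, data_end: date,
                  perf_months: int, lag_months: int = 1) -> dict:
    """Split history into observation, lag and performance windows.

    Each window is returned as (first_month, last_month), both inclusive,
    with months represented by their first day. Hypothetical helper.
    """
    perf_end = date(data_end.year, data_end.month, 1)
    perf_start = add_months(perf_end, -(perf_months - 1))
    lag_start = add_months(perf_start, -lag_months)
    lag_end = add_months(perf_start, -1)
    obs_start = date(data_start.year, data_start.month, 1)
    obs_end = add_months(lag_start, -1)
    if obs_end < obs_start:
        raise ValueError("not enough history for an observation window")
    return {
        "observation": (obs_start, obs_end),
        "lag": (lag_start, lag_end),
        "performance": (perf_start, perf_end),
    }

# History from Jan'13 to Dec'15, 6-month performance window, 1-month lag
windows = split_windows(date(2013, 1, 1), date(2015, 12, 1), perf_months=6)
for name, (start, end) in windows.items():
    print(f"{name:12s} {start:%b %Y} to {end:%b %Y}")
```

With these inputs the observation window runs from Jan 2013 to May 2015, the lag month is Jun 2015, and the performance window runs from Jul 2015 to Dec 2015, matching the example above.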

Figure: Observation and Performance Window

Factors in choosing Observation Window

1. Take enough observations (cases) into account to develop a model.

2. Take any seasonal influences into account. For example, if you are predicting revenue for December, which includes the Christmas period, it is important to include predictors from December of past years, because the behavior of variables in December is significantly different from other months.

3. There is no fixed window for all models. It depends on the type of model.

Factors in choosing Performance Window

1. The performance window should be long enough to capture enough events. This can be checked with vintage analysis, as explained below.

Start with performance windows of several lengths and calculate the event rate for each. Select the length at which the event rate stabilizes, that is, where it no longer increases much. This is called vintage analysis.

2. The performance window depends on the model you are building; in other words, it depends on the product being modeled. For example, the performance window for a customer attrition model for a savings product would be different from that for a Certificate of Deposit model.
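The vintage analysis described in point 1 can be sketched roughly as follows. This is a minimal illustration, not a definitive method: the function names, the made-up cohort, and the stabilization tolerance `tol` are all assumptions, and it assumes a single cohort in which every account has been observed for the full `max_months`.

```python
def cumulative_event_rates(months_to_event, max_months):
    """Cumulative event rate for performance windows of 1..max_months months.

    months_to_event holds, per account, the month in which the event
    occurred (1-based), or None if no event was observed.
    """
    n = len(months_to_event)
    rates = []
    for m in range(1, max_months + 1):
        events = sum(1 for t in months_to_event if t is not None and t <= m)
        rates.append(events / n)
    return rates

def stable_window(rates, tol=0.005):
    """Smallest window length after which every further month adds less
    than tol to the cumulative event rate (tol is an assumed threshold)."""
    for i in range(len(rates) - 1):
        later_increments = (rates[j + 1] - rates[j]
                            for j in range(i, len(rates) - 1))
        if all(inc < tol for inc in later_increments):
            return i + 1
    return len(rates)

# Made-up cohort of 100 accounts: 10 events, all within the first 4 months
months_to_event = [1] * 4 + [2] * 3 + [3] * 2 + [4] * 1 + [None] * 90
rates = cumulative_event_rates(months_to_event, max_months=6)
print(rates)
print("stable performance window (months):", stable_window(rates))
```

In this made-up cohort the cumulative event rate stops growing after month 4, so a 4-month performance window would be chosen. In practice the tolerance is a judgment call and the curve is usually inspected visually as well.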

Typical Performance Window

1. In credit risk modeling, a 'bad' account is generally defined as 90+ days past due, observed over a performance window of the next 12-24 months.

2. In the telecom industry, bad customers are generally defined as 60+ days past due or with no payment made, observed over a performance window of 6-12 months.
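As a rough illustration of how such a definition turns into a target flag, the sketch below marks an account as bad if it ever reaches the delinquency threshold within the performance window. The function name `is_bad` and the input layout (one "days past due" value per month of the performance window) are assumptions, and only the days-past-due part of the definitions is modeled.

```python
def is_bad(dpd_by_month, threshold_days=90, window_months=12):
    """Return True if days past due reaches threshold_days in any of the
    first window_months months of the performance window."""
    return any(dpd >= threshold_days for dpd in dpd_by_month[:window_months])

# Credit risk style definition: 90+ days past due within 12 months
print(is_bad([0, 0, 30, 60, 90, 30]))        # reaches 90 in month 5
print(is_bad([0, 30, 60, 30, 0, 0]))         # never reaches 90
print(is_bad([0] * 12 + [120]))              # 120 only after the window

# Telecom style definition: 60+ days past due within 6 months
print(is_bad([0, 30, 65], threshold_days=60, window_months=6))
```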

Rolling Performance Window

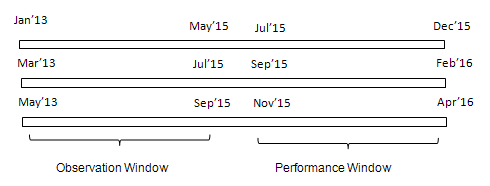

A rolling performance window means building the model on multiple windows while keeping the duration of each performance window fixed, as shown in the image.

Figure: Rolling Performance Window

Why Rolling Performance Window?

1. Seasonality

Customer behavior is not always constant over time. For example, the attrition rate in one period may be 10%, and in another period it may go up or down. There could be some seasonality in it. When we take a single performance window, we assume that the variables are constant over time. When we take multiple performance windows, we capture seasonality in the model.

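2. Including Multiple Campaigns

If you are building a campaign response model, campaign data from multiple periods should be considered.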

Example: Campaign Response - Rolling Performance Windows

- Customers targeted in Jan 2015 for the home loan campaign: whether they took the loan from Feb 2015 to April 2015

- Customers targeted in Feb 2015 for the home loan campaign: whether they took the loan from March 2015 to May 2015

- Customers targeted in March 2015 for the home loan campaign: whether they took the loan from April 2015 to June 2015
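The three campaign windows above can be generated and stacked into a single modeling dataset along these lines. This is a sketch under assumptions: the helper names, the customer IDs and the loan dates are made up for illustration, and months are represented by their first day.

```python
from datetime import date

def add_months(d: date, n: int) -> date:
    """Return the first day of the month that is n months after d's month."""
    idx = d.year * 12 + (d.month - 1) + n
    return date(idx // 12, idx % 12 + 1, 1)

def rolling_windows(first_campaign: date, n_campaigns: int, perf_months: int = 3):
    """One (campaign_month, perf_start, perf_end) tuple per monthly campaign.
    The performance window always has the same length, perf_months."""
    windows = []
    for k in range(n_campaigns):
        campaign = add_months(first_campaign, k)
        perf_start = add_months(campaign, 1)
        perf_end = add_months(perf_start, perf_months - 1)
        windows.append((campaign, perf_start, perf_end))
    return windows

def label_rows(targeted, loan_month, windows):
    """Stack all campaigns into one list of (campaign, customer, response)."""
    rows = []
    for campaign, start, end in windows:
        for cust in targeted.get(campaign, []):
            taken = loan_month.get(cust)
            responded = taken is not None and start <= taken <= end
            rows.append((campaign, cust, int(responded)))
    return rows

windows = rolling_windows(date(2015, 1, 1), n_campaigns=3)

# Made-up data: who was targeted in each campaign, and when loans were taken
targeted = {date(2015, 1, 1): ["A", "B"],
            date(2015, 2, 1): ["C"],
            date(2015, 3, 1): ["D"]}
loan_month = {"A": date(2015, 3, 1), "C": date(2015, 6, 1), "D": date(2015, 6, 1)}

rows = label_rows(targeted, loan_month, windows)
for row in rows:
    print(row)
```

Customer C took the loan in June 2015, which falls outside the March to May window for the February campaign, so C is labeled as a non-responder. All rows end up in one dataset, on which a single model is built.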

Hi,

I am not clear why we require a lag between the observation and performance windows. To test the model, we can use new data and verify. How is the lag period related to implementation of the model?

Thanks,

Ankit

I have added more description about the one month lag logic. Hope it helps!

Thanks, it does.

What is actually meant by 'scoring' here?

Scoring is nothing but the predicted probability of the event.

Hello!

Thank you so much for a great article.

I'm not clear about how specifically we apply vintage analysis to choose the performance window. Could you please add some more description of that, or some kind of example?

Thank you!

Hi,

Basically, a rolling performance window means building multiple models, right? How do we combine all of them? Or should they be used independently? Thanks

No. Build one single model on the data that comes from the different performance windows.

Thank you, your article really helps me. If a model has already been built using 12 months as the performance window, does it mean that we must use 12 months to see whether the model predicts correctly, even if the model has been in use for 24 months? Or can we use 24 months of data to assess the accuracy of the model? Thank you.

'Initially take multiple length of the performance windows and calculate event rate against these periods. Select the period at which event rate stabilizes which means event rate does not increase much. It is called vintage analysis.' - Are only newly opened accounts as of that month considered for that specific cohort, or all the accounts as of that month, for the vintage analysis?

I am interested in vintage analysis using SAS. If you could share some code related to vintage analysis and roll rate analysis, that would be really appreciated.

Hi Deepanshu, this is useful. What about an employee attrition model, if we take a 6-month prediction window? Can you help with some ideas?

Hi @SB, in an employee attrition model the performance window should be less than 6 months, because a lot changes for a person in 6 months and there may be other factors or variables that affect the employee's decision.
